What Happens When the New Team Member is AI?

Ahmed Rezika, SimpleWays OU

Posted 5/7/2026

Let’s welcome our new colleague

Not a contractor or part-time freelancer.

Not an intern.

Not an expert, a consultant or lead.

A robot.

That may sound futuristic, yet AI agents already support planning, diagnosis, and decisions. Physical collaboration may not be far behind. The idea is no longer science fiction. It is already being discussed in industrial forums such as the Hannover Messe panel Bringing Humanoids to Production [1], where panelists from NVIDIA, BMW, Microsoft, and Hexagon explored the topic. We are not raising a distant hypothetical. We are examining a practical communication challenge that may intensify as the robot colleague (or “humanoid,” as the term is increasingly used) moves from discussion into operations.

Now imagine speaking to this colleague the way people often speak to each other.

“Looks fine for now.”

“Keep an eye on that pump.”

“Don’t you see how bright the workshop is?”

A human may detect irony, context, or implied concern. A robot may take every word literally. That difference matters. Misread meaning can distort action, lower standards, and introduce risk. A vague instruction can confuse a machine. Yet the same vague instruction can also confuse a technician, a planner, or the next shift.

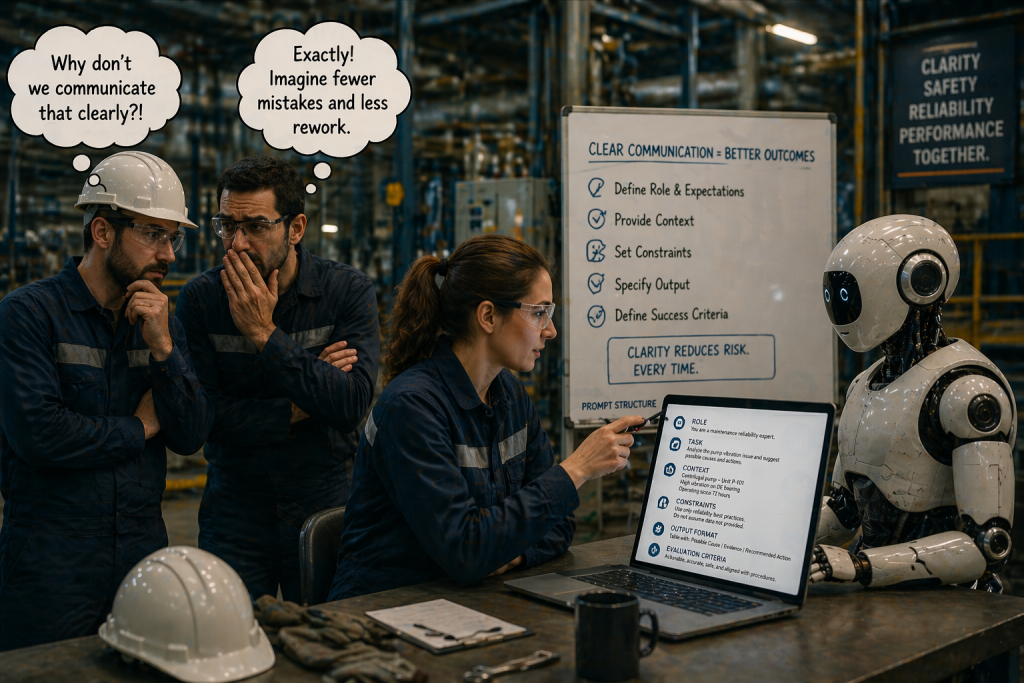

This raises a harder question. If we know we must communicate clearly with a machine, why not demand the same clarity with each other?

Maintenance teams have always pursued clear handoffs, precise job plans, and shared understanding. Project managers have done the same. Prompt engineering simply gave this old discipline a new spotlight.

Communication is the bridge between different realms of thinking. Within a team and across teams, those bridges are sometimes burnt, and they fail when clarity is weak. We may work side by side in the same location and still exist in different realms. That is why communication clarity is not soft-skill polish. It is a reliability discipline.

Onboarding a Robot Teammate Starts Where Human Onboarding Always Has

Safety Comes Before Capability

When a new technician joins a maintenance team, the first concern is rarely speed, output, or productivity. It is safety. Before a new person touches critical equipment, the team establishes boundaries. Hazards are reviewed. Isolation procedures are explained. Permits, escalation paths, and what must never be improvised are made clear. No one assumes competence simply because a person arrives with credentials. Understanding is validated before responsibility expands.

That is not distrust. That is responsible onboarding.

A robot colleague, or an autonomous digital assistant embedded in maintenance work, would demand the same discipline. Before asking such a system to support diagnosis, recommend actions, or influence decisions, the team would define operating limits. What information may it use? What decisions may it support but not make? What conditions require human escalation? What actions remain prohibited no matter how confident the system appears? These questions resemble constraints and validation checks in structured prompting, yet they also resemble how maintenance has always approached safe work.

That comparison exposes an uncomfortable truth. If we believe a robot colleague should begin inside carefully defined safety boundaries, why do human teams sometimes leave expectations vague for new hires? How often do people receive access, broad instructions, and then learn real boundaries only after mistakes? That is not clarity. That is drift disguised as onboarding.

Whether human or machine, safe collaboration begins when limits are explicit. In fact, the discipline needed to safely introduce a robot colleague may remind us how much stronger human onboarding could become when communication is equally deliberate.
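To show what "explicit limits" could look like for a digital assistant, here is a minimal Python sketch. The action names and the three-way verdict are hypothetical, chosen only to mirror the questions above: what the assistant may support, what is prohibited, and what goes to a human. It is an illustration under those assumptions, not a recommended policy.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"        # the assistant may support this task
    ESCALATE = "escalate"  # a human must decide
    REFUSE = "refuse"      # prohibited, however confident the system appears


@dataclass(frozen=True)
class OperatingLimits:
    # Hypothetical example entries; a real team would define its own.
    may_support: frozenset = frozenset(
        {"interpret_vibration_data", "draft_inspection_route", "flag_anomaly"}
    )
    prohibited: frozenset = frozenset({"bypass_interlock", "remove_lockout"})

    def check(self, action: str) -> Verdict:
        """Classify a requested action against the stated limits."""
        if action in self.prohibited:
            return Verdict.REFUSE
        if action in self.may_support:
            return Verdict.ALLOW
        # Anything not explicitly listed defaults to human escalation.
        return Verdict.ESCALATE


limits = OperatingLimits()
for requested in ("flag_anomaly", "remove_lockout", "order_spare_parts"):
    print(requested, "->", limits.check(requested).value)
```

The design choice worth noticing is the default: an action nobody thought to list is escalated rather than allowed, which is the same instinct a careful supervisor applies to a new hire.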

Capability Is Discovered Through Guided Work

Good onboarding does not end with orientation. It tests capability in practice.

A new technician often starts with pilot jobs, low-risk assignments, supervised inspections, or paired work alongside experienced colleagues. The goal is not simply to watch whether tasks are completed. The goal is to observe judgment, habits, communication, and response under real conditions. Trust grows through exposure.

This process is not far from how one would responsibly onboard a robot colleague. No prudent team would grant broad authority on day one. It would likely begin with narrow support tasks. Interpret vibration data. Draft inspection routes. Flag anomalies. Suggest possible causes, but do not act independently. Then the system would be tested. Its outputs would be compared. Its reasoning challenged. Its behavior observed across scenarios, crews, and conditions.

In effect, capability would be explored the same way it is explored in a new human teammate: through guided work, pilot assignments, feedback, and iteration.

That realization matters because strong teams do not merely assign tasks. They discover where each member adds strength. One person may excel in troubleshooting. Another in precision tasks. Another in planning. Another in calm response under pressure. As those strengths become visible, burdens can be shared. Teams can shoulder tasks that once felt heavy for one person alone.

This is where the robot colleague idea becomes practical rather than symbolic. Properly onboarded, such a system might eventually take analytical burdens, repetitive burdens, or information burdens that weigh on people. Yet this only works if capability is observed, not assumed.

And again the lesson turns back toward humans. If we would validate and grow trust carefully with a machine, why would we rush trust-building with people? Why do teams sometimes expect collaboration before they have built shared understanding?

Often, friction does not arise because people lack skill. It arises because capability has not been explored clearly enough for trust to form.

That is a communication issue.

And onboarding is where it begins.

Shared Expectations Turn Assistance into Collaboration

The deepest part of onboarding is not safety. It is not even capability. It is expectation.

Teams carry expectations about quality, ownership, response, and what “done right” means. A new colleague learns not only how to perform tasks, but how the team defines good work. That is cultural onboarding.

It is also what allows collaboration to scale.

If I know your skill set, your judgment, and your standards, I can hand off difficult work with confidence. I can trust you with tasks that once sat heavily on my shoulders. That is how teams reduce individual burden and increase collective resilience.

A robot colleague would require the same expectation-setting. What performance level is acceptable? When should it ask questions rather than assume? When should it refuse uncertain instructions? When should it escalate disagreement? What level of evidence should support a recommendation?

These are expectation questions.

And expectation questions are communication questions.

This is where onboarding and prompt engineering begin to converge. A prompt, at its best, is a statement of expectations. And onboarding, at its best, is a long-form prompt teaching a colleague how to participate reliably in shared work.

Seen this way, onboarding a robot colleague does not introduce a foreign discipline. It simply makes explicit what strong teams often do implicitly. State boundaries. Test capability. Build trust. Define expectations. Refine through feedback.

That sequence would strengthen human interactions as much as machine collaboration.

Many team tensions do not come from bad intent. They come from unclear expectations left unspoken. Burnt communication bridges often begin there. Better onboarding can prevent some of that before it starts.

What a Robot Colleague Teaches About Communication Clarity

Recent prompt engineering methods such as CREATE, SMART, and ABCD all aim to improve how people communicate with AI agents. They encourage users to define context, state intent, set boundaries, and describe expected outcomes. In short, they reduce ambiguity.

That sounds modern. Yet the principle is not new. Maintenance teams have relied on similar discipline for years. A sound job plan defines scope, identifies conditions, sets constraints, and describes what success looks like. A permit does the same. A handoff does the same. Even a well-written work order acts as a prompt designed to guide reliable action.

The logic is shared. Unclear prompts can trigger hallucination in an AI system. Unclear instructions can trigger confusion in a maintenance task. Different systems, same pattern. This is not a software problem. It is a communication problem.

Psychology has long warned that people fill information gaps with assumptions. Daniel Kahneman [2] showed how fast thinking often substitutes inference for certainty. Karl Weick [3] showed how people construct meaning when signals are incomplete. When clarity is weak, interpretation rushes in.

And interpretation is where drift begins. This is where the robot colleague thought experiment becomes practical. If we would not tell an AI agent “check the pump soon,” why would we tell a human that?

What does “soon” mean?

Ten minutes?

This shift?

Before failure?

A machine demands precision because it cannot safely guess.

Humans often guess too much. That may be the deeper lesson prompt engineering exposes. The same structure we now use to communicate properly with machines can improve communication between people. State the condition. Define the task. Clarify the expected result. Confirm understanding.

That is not new digital wisdom. That is disciplined communication. Maintenance has always practiced forms of prompt engineering. It simply did not call them prompts. And when communication loses structure, instructions can become like stray fields—energy exists, but direction is lost. That is where reliability starts to drift.
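The "what does soon mean?" test can be sketched as a tiny check that flags open-ended phrases before an instruction is issued, whether to a machine or to the next shift. The phrase list below is a hypothetical starting point drawn from the examples in this article, not a complete vocabulary.

```python
import re

# Hypothetical list of phrases that leave timing or scope open to guessing.
VAGUE_PHRASES = ("soon", "for now", "keep an eye on", "looks fine")


def find_vague_phrases(instruction: str) -> list[str]:
    """Return the listed vague phrases that appear in the instruction."""
    text = instruction.lower()
    return [
        phrase
        for phrase in VAGUE_PHRASES
        if re.search(rf"\b{re.escape(phrase)}\b", text)
    ]


for line in (
    "Check the pump soon",
    "Keep an eye on that pump",
    "Inspect pump seal leakage before end of shift and record the result",
):
    print(line, "->", find_vague_phrases(line))
```

A check like this cannot judge meaning. It only prompts the writer to replace a flagged phrase with a condition, a task, and an expected result.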

So let’s see how PMI [4] formalized some communication principles humans already use when work is done well.

1. CREATE: Context Creates Better Responses, in Maintenance Too

CREATE guides comprehensive prompt creation, ensuring all essential aspects are addressed. That is not artificial intelligence thinking. That is disciplined human communication.

Character: Clearly define the role of the project manager or AI in the prompt.

Request: Specify the task or objective that needs to be fulfilled by the AI.

Examples: Provide relevant examples or references to guide the AI in completing the task.

Adjustments and constraints: Outline any adjustments or additional requirements considering constraints.

Types of output: Specify the format or type of output expected from the AI.

Evaluation and steps: Define criteria for success and break down the task into actionable steps for the AI.

A clear work order often follows the same logic. So does a good task procedure or SOP. CREATE simply gives familiar practice a formal structure.
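As a rough illustration, the six CREATE elements can be held in one small structure that serves equally as a prompt or as a work order skeleton. The class name `CreateBrief`, its field names, and the sample values are assumptions made for this sketch; they do not come from the PMI workbook.

```python
from dataclasses import dataclass, fields


@dataclass
class CreateBrief:
    """The six CREATE elements, usable for a prompt or a work order."""
    character: str = ""
    request: str = ""
    examples: str = ""
    adjustments: str = ""
    type_of_output: str = ""
    evaluation: str = ""

    def missing(self) -> list[str]:
        """Names of the elements that were left blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def render(self) -> str:
        """One labelled line per element, in CREATE order."""
        return "\n".join(
            f"{f.name.replace('_', ' ').capitalize()}: {getattr(self, f.name)}"
            for f in fields(self)
        )


# Sample values are illustrative only.
brief = CreateBrief(
    character="Vibration analyst",
    request="Inspect pump seal leakage, verify coupling alignment, "
            "and record operating condition.",
    type_of_output="Completed inspection checklist",
)
print(brief.render())
print("Still undefined:", brief.missing())
```

Listing what is still undefined is the useful part: it turns "the constraints were assumed" into something visible before the job is released.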

CREATE and the Work Order Were Never Far Apart

Character: Define Who Should Perform the Work

In prompt engineering, Character defines the role the AI should assume while answering you.

A work order often does the same. It may not say “character,” but it defines the needed qualification: electrical technician, vibration analyst, certified welder, or instrument specialist.

That is role definition. It tells the system who should respond. And this raises a useful question: If we specify the right competence for a machine, why would we issue human work without equal clarity on who is qualified to perform it?

Request: Define the Task Clearly

The Request in CREATE specifies the task or objective.

A good work order does exactly this.

Not: Check pump.

But: Inspect pump seal leakage, verify coupling alignment, and record operating condition.

The first is vague. The second is actionable.

Humans and machines both perform better when the task is explicit.

Examples and Constraints: The Procedure Lives Here

CREATE uses examples, references, and constraints to guide execution.

Maintenance procedures do this through job steps, drawings, standards, tolerances, lockout requirements, and safety limits. These are not extras. They prevent improvisation where precision is required.

This is where many communication bridges fail. The task may be stated, but the constraints are left assumed. That is where drift enters.

Types of Output: Define What “Done” Looks Like

Prompt engineering asks what output is expected. Table? Summary? Risk register?

A work order should do the same. What is the expected output? Torque values recorded, bearing temperature within limit, alignment within tolerance, or an inspection checklist completed.

This is measurable success. Without defining output, “task completed” can mean different things to different people. And that is not clarity.
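One way to picture "done" as something measurable is a list of completion criteria checked against recorded readings. The sketch below assumes hypothetical criterion names and limits; real values would come from the equipment's own standards and tolerances.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    low: float
    high: float


def unmet(criteria: list[Criterion], readings: dict[str, float]) -> list[str]:
    """Return the criteria that are unrecorded or outside their limits."""
    failures = []
    for c in criteria:
        value = readings.get(c.name)
        if value is None or not (c.low <= value <= c.high):
            failures.append(c.name)
    return failures


# Hypothetical limits for illustration only.
criteria = [
    Criterion("bearing_temperature_c", 0.0, 80.0),
    Criterion("coupling_offset_mm", 0.0, 0.05),
]
readings = {"bearing_temperature_c": 72.0}  # alignment was never recorded

print("Open items:", unmet(criteria, readings))
```

A missing reading counts as unmet, so a job cannot be closed simply because nobody wrote the number down.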

Evaluation and Steps: Reliability Requires Verification

CREATE includes evaluation criteria and actionable steps.

That may be the strongest link to maintenance, because this is the handover procedure sequence, the self-checks, the peer verification, and the completion criteria: confirmation that the task was done right. The task is not complete because someone says so. It is complete because success can be verified.

Seen this way, CREATE does not look foreign to maintenance at all. It looks familiar. That is not just prompt engineering. That is a disciplined work order. And perhaps the lesson is simple: If this level of clarity improves communication with machines, why should humans accept anything less?

2. SMART: The Long-Used Goal-Setting Strategy

SMART ensures project objectives are specific and measurable, guiding project success.

Specific: Clearly state the specific objective or goal for the AI to achieve.

Measurable: Define how the AI’s progress or output will be measured.

Achievable: Ensure the task assigned to the AI is realistic and feasible.

Relevant: Align the AI’s task with the project’s overall goals and objectives.

Time-bound: Set a deadline or timeline for the AI to complete the task.

The SMART goal-setting strategy is generally credited to George T. Doran, who published “There’s a S.M.A.R.T. Way to Write Management’s Goals and Objectives” in the November 1981 issue of Management Review (AMAFOR, an American Management Association publication). Maintenance teams have known and used it for a long time, so we will not focus on it here.

3. ABCD: Recognize the Context Before and After the Event

ABCD analyzes project-related behaviors and decisions, aiding in problem-solving.

Antecedent: Describe the event or circumstance preceding the prompt for the AI’s understanding.

Behavior: Specify the actions or behavior the AI should exhibit in response.

Consequences: Explain the potential consequences or outcomes of the AI’s behavior.

Decision: Describe the decision-making process the AI should follow.

ABCD and Maintenance Response Thinking

Antecedent: Start with the Condition

The antecedent defines the event or condition that exists before action is taken. Maintenance teams begin here all the time: a high vibration alarm, observed seal leakage, a breaker trip after motor start, pressure dropping below operating range, and many other everyday incidents.

This is not yet the action. It is the condition that frames the action. A good work order or operator report should state this clearly, because poor response often begins when the initiating condition is vague.

If the antecedent is misunderstood, everything downstream may drift.

Behavior: Define the Required Response

Behavior in ABCD specifies what action should follow. This aligns with response instructions: inspect, isolate, verify, restart under controlled conditions, escalate, and so on.

Again, vague language can weaken execution:

“Watch the pump.” Does that mean monitor trends? Stand by it? Inspect hourly?

Behavior should not depend on guesswork. Whether speaking to a machine or a human, the response should be explicit.

Consequences: Make Risk Visible

This is where ABCD becomes powerful. Consequences force the communicator to make outcomes visible by stating explicitly what happens if action is missed: seal damage may escalate, safety exposure may increase, downtime may extend, or cyber controls may weaken.

Maintenance often carries this knowledge informally. ABCD makes it explicit, because explicit consequences change behavior. People respond differently when they understand what is at stake. That is why consequences are spelled out for machines as well: machines also need those boundaries.

Decision: Define How Judgment Should Be Made

Decision is not just “choose.” It defines the logic for choosing: continue operating if vibration remains below the alarm limit, shut down if temperature exceeds the threshold, escalate to engineering if the root cause remains unknown. That is decision structure.

Maintenance uses this in troubleshooting trees, alarm logic, and emergency response.

ABCD simply makes it visible.
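The decision rules named above can be written down as a short function, which is one way to make the logic visible. This is a sketch under stated assumptions: the order in which the rules are evaluated, the parameter names, and the sample limits are all hypothetical, and "root cause unknown" is approximated here as the case where neither of the first two rules gives a clear answer.

```python
from enum import Enum


class Action(Enum):
    CONTINUE = "continue operating"
    SHUT_DOWN = "shut down"
    ESCALATE = "escalate to engineering"


def decide(vibration: float, temperature: float, *,
           vibration_alarm: float, temperature_limit: float) -> Action:
    """Apply the three rules in an assumed priority order."""
    # Rule 1 (assumed highest priority): temperature above threshold.
    if temperature > temperature_limit:
        return Action.SHUT_DOWN
    # Rule 2: vibration still below the alarm limit.
    if vibration < vibration_alarm:
        return Action.CONTINUE
    # Rule 3: nothing above explains the condition, so a person decides.
    return Action.ESCALATE


# Sample limits are illustrative only.
limits = {"vibration_alarm": 4.5, "temperature_limit": 85.0}
print(decide(2.0, 60.0, **limits).value)
print(decide(2.0, 90.0, **limits).value)
print(decide(5.0, 60.0, **limits).value)
```

Writing the rules this way forces two questions that spoken instructions often skip: which rule wins when two apply, and what happens when none does.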

Why This Matters

Seen through a maintenance lens, ABCD and the other prompt formulas resemble how reliable response should already be framed. That is not a prompt trick for nudging AI to work better for you. That is disciplined problem-solving.

The Thought Experiment

The thought experiment of onboarding a robot colleague points back to something maintenance teams have long understood. Safety comes first. Capability is validated through guided work. Trust grows through pilot assignments. Burdens are shared according to proven strengths. Expectations are aligned before autonomy expands.

These are not futuristic ideas. They are mature human practices.

What changes with a robot colleague is not the principle, but the visibility of the principle. Communicating with a machine forces us to make explicit what people often leave implied.

And perhaps that is the larger lesson. The discipline needed to onboard a reliable robot colleague may be the same discipline that improves how humans onboard, collaborate, and support one another.

In that sense, the robot is not replacing the team. It is revealing how the team can communicate better.

And if communication is the bridge between different realms of thinking, better onboarding may be where that bridge is first built.

Must-Know Jargon

Prompt Engineering: Prompt engineering is the practice of structuring instructions so an AI system can respond reliably. It focuses on clarity, context, constraints, and expected outcomes. In this article, it also serves as a mirror for improving human communication.

Ambiguity: Ambiguity exists when words allow multiple interpretations. In maintenance, ambiguous instructions can create execution drift, safety risk, or inconsistent results. Clarity reduces ambiguity.

Hallucination: In AI, hallucination means generating responses that sound plausible but are unsupported or incorrect. In human terms, a rough parallel occurs when people fill gaps with assumptions. Both can lead to error if left unchecked.

Validation Check: A validation check confirms that information, decisions, or completed work meet expected standards. Examples include peer review, repeat-backs, sign-offs, and independent verification. It is a barrier against drift.

Execution Drift: Execution drift happens when work gradually moves away from the intended method or standard. It often begins with unclear instructions, assumptions, or weak feedback loops. Small drift can grow into major failure.

Shared Mental Model: A shared mental model means team members hold a common understanding of conditions, priorities, and expected actions. It improves coordination, handoffs, and response under pressure. Reliable teams depend on it.

Onboarding: Onboarding is the structured process of integrating a new person, or potentially a new autonomous system, into safe and effective work. It includes boundaries, capability testing, trust-building, and expectation setting. Good onboarding strengthens both safety and collaboration.

References

[1] Hannover Messe, 20-24 April 2026. From Physical AI to Humanoid Robots: Bringing Industrial Autonomy to Life. Panel featuring speakers from NVIDIA, Microsoft, BMW, and Hexagon, Hannover Messe 2026. https://www.hannovermesse.de/event/bringing-humanoids-to-production/pan/92704 and https://www.nvidia.com/en-us/events/hannover-messe/

[2] D. Kahneman. Thinking, Fast and Slow. Penguin Books Ltd., London, 2011. A research paper about this book: https://decisive-workshop.dbvis.de/wp-content/uploads/2017/09/0116-paper.pdf

[3] B. Czarniawska (1997). Sensemaking in organizations: by Karl E. Weick (Thousand Oaks, CA: Sage Publications, 1995), 231 pp. Scandinavian Journal of Management, 13, 113–116. doi:10.1016/S0956-5221(97)86666-3. https://www.researchgate.net/publication/257397559_Sensemaking_in_organizations_by_Karl_E_Weick_Thousand_Oaks_CA_Sage_Publications_1995_231_pp

[4] Talking to AI: Prompt Engineering for Project Managers (Workbook). Project Management Institute, 2024. https://www.pmi.org/shop/p-/elearning/talking-to-ai-prompt-engineering-for-project-managers/el128
