What is More Important – the Digital Tool or its Users

Ahmed Rezika, SimpleWays OU

Posted 3/3/2026

In the modern industrial landscape, the creation of a digital tool often represents a significant investment of time, expertise, and financial resources. Teams of developers, product managers, and subject matter experts work diligently to design solutions that promise efficiency, visibility, and control. The result is frequently a technically impressive product—robust in architecture, rich in features, and aligned with organizational strategy. Yet, despite these efforts, many digital tools fail to deliver their intended value. Not because they lack capability, but because they lack acceptance.

The paradox of digital transformation is that technical excellence alone does not guarantee operational impact. A digital tool does not create value in isolation; it creates value only when it is meaningfully adopted and integrated into the daily behaviors of its users. When organizations focus disproportionately on the tool itself—its features, performance, or technical sophistication—they risk overlooking the most critical variable in the equation: human engagement. Without genuine user buy-in, even the most advanced system becomes shelfware—present, but powerless.

This raises a fundamental question: where should effort be directed when developing a digital tool? The answer is not to reduce technical rigor, but to rebalance priorities. Effort must be equally—if not primarily—invested in understanding the users: their motivations, their fears, their habits, and their workflows. Adoption is not an event that happens after deployment; it is the outcome of decisions made at every stage of design and development. When users recognize their realities reflected in the tool, they become participants in its success rather than subjects of its imposition.

Demystifying the effort behind building digital tools requires shifting the narrative. Success is not defined solely by delivering a functional system, but by enabling a behavioral transition. The true measure of a digital tool lies not in what it can do, but in what its users choose to do with it. This perspective builds naturally on the themes explored in my previous article, The New Toolkit: Why Maintenance Digital Skills Can’t Be Outsourced, where digital capability was framed not as an external deliverable, but as an internal, human-centered competence. This article extends that discussion by examining how software and maintenance projects evolve and the psychological forces that determine whether digital tools are embraced or quietly resisted.

From Concept to Rollout: A User-Aligned Blueprint for Digital Tool Success

At its core, digital tool development is not a single event but a process of deliberate stages — each with distinct goals, risks, and opportunities for user alignment. The journey begins with problem framing and hypothesis definition. Rather than starting with a fully formed solution in mind, high-performing teams define the problem space and articulate testable assumptions about user needs, workflows, and outcomes. This stage is critical because it sets the trajectory for the entire project. Without a well-defined problem — grounded in user research — the team risks building a tool that solves the wrong problem.

Once the problem is framed, teams typically move into Minimum Viable Product (MVP) development. An MVP is not a “half-finished product,” but the smallest set of features that can be tested with real users to validate core hypotheses about value and behavior. This approach, central to Lean Startup methodology, helps avoid overbuilding by focusing investment only on features that users truly need and adopt. An MVP enables learning about real user behavior with minimal time and resource investment, reducing both technical waste and organizational risk.

In practice, many successful products have followed this pattern:

- Dropbox validated demand with a demo video and a simple file-sync MVP before scaling into a full platform, refining its roadmap based on whether people regularly used and recommended the basic syncing feature [1].

- Spotify structured its early development around iterative sprints, delivering incremental features, measuring how users engaged with them, and adjusting priorities accordingly.

These examples demonstrate that MVPs are not limited to startups — they are a strategic tool for digital innovation at any scale.

After the MVP is released to a controlled audience, development enters the pilot or proof-of-concept (POC) phase. Here, the focus shifts from technical readiness to organizational adoption. Early adopters interact with the system in real operational environments, offering qualitative feedback (user interviews, usability testing) and quantitative data (analytics, feature engagement metrics). This feedback loop is invaluable: it surfaces real pain points that may not have appeared in internal usability tests, such as timing mismatches, workflow contradictions, or feature overload.

Critically, digital tools must evolve not only functionally, but also organizationally. Feedback often reveals gaps that are not about the code — but about training, incentives, cultural practices, and decision authority. A tool that is functionally excellent but organizationally misaligned will struggle to attain sustained use.

Successful development teams institutionalize continuous tuning through short iterative cycles:

- Measure — collect user engagement metrics and direct feedback.

- Analyze — identify patterns in adoption barriers.

- Prioritize — decide what to fix, iterate, or pivot.

- Integrate — incorporate both functional enhancements and training or rollout adjustments.

- Deploy & Validate — release changes to users and continue capturing results.

This loop mirrors Agile and Lean product disciplines widely used in modern software organizations, where the cadence of delivery and feedback is frequent, measurable, and user-centered.
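The Measure, Analyze, and Prioritize steps of this loop can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the event log, the user IDs, the feature names, and the 50% threshold are all hypothetical assumptions chosen for the example.

```python
from collections import defaultdict

# Hypothetical usage log from a pilot: (user_id, feature) pairs.
events = [
    ("tech_01", "work_order_close"),
    ("tech_01", "photo_upload"),
    ("tech_02", "work_order_close"),
    ("tech_03", "work_order_close"),
    ("tech_03", "work_order_close"),
]
# Hypothetical pilot group; tech_04 never opened the tool.
pilot_users = {"tech_01", "tech_02", "tech_03", "tech_04"}


def adoption_by_feature(events, users):
    """Measure: share of pilot users who used each feature at least once."""
    seen = defaultdict(set)
    for user, feature in events:
        if user in users:
            seen[feature].add(user)
    return {feature: len(found) / len(users) for feature, found in seen.items()}


def needs_attention(rates, threshold=0.5):
    """Analyze and prioritize: features below the threshold, lowest first."""
    low = [(feature, rate) for feature, rate in rates.items() if rate < threshold]
    return sorted(low, key=lambda item: item[1])


rates = adoption_by_feature(events, pilot_users)
backlog = needs_attention(rates)
print(rates)
print(backlog)
```

A low rate only flags where to look. Whether the fix is a code change, a training session, or a workflow adjustment still has to come from talking to the users.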

A modern illustration of this principle is the rapid evolution of generative AI platforms like OpenAI’s ChatGPT ecosystem [2]. Rather than launching a fully featured system and declaring victory, OpenAI released earlier versions (e.g., ChatGPT based on GPT-3.5) and used real-world usage to guide subsequent development of GPT-4o and GPT-5, including improvements driven by user behavior and external evaluations. This iterative approach enabled OpenAI to refine the model’s reliability, usefulness, and safety in production, using live signals from millions of users — a testament to how continuous, user-informed iteration at scale drives real adoption and impact.

Across industries, this pattern holds: tools that launch narrowly and adapt broadly outperform tools that launch broadly and stagnate. And the reason is simple — they center user learning loops as part of development, rather than treating users as passive recipients after the fact. By creating pilots, MVPs, and feedback-driven sprints, organizations ensure that the digital tool evolves in lockstep with user needs, increasing both adoption rates and realized value.

Building a Digital Tool Is Like Executing a Major Maintenance Project

Developing a digital tool is often treated as an abstract, technical endeavor—lines of code, sprints, and feature releases. Yet for those in maintenance and asset-intensive industries, a far more intuitive analogy exists: building a digital tool is remarkably similar to planning and executing a major maintenance project. The same principles that govern a successful plant turnaround, fleet modernization, or reliability transformation [3] also determine whether a digital tool thrives or fails.

The difference is not in the discipline. It is in the medium.

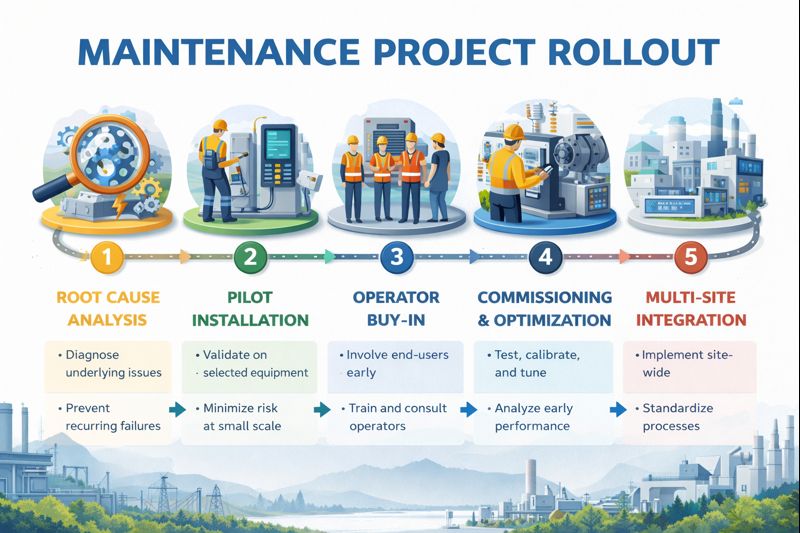

1. Problem Definition: Root Cause Before Replacement

In maintenance, replacing a component without diagnosing the root cause is a costly mistake. A failed pump may not be the problem; cavitation, poor lubrication practices, or misalignment might be the real issue.

Organizations such as Toyota built their reliability excellence on structured root cause analysis and continuous improvement under the Toyota Production System. The principle is simple: solve the real problem, not the visible symptom.

Digital tools follow the same rule.

If the perceived issue is “technicians don’t enter data,” the real cause might be:

- The interface adds 10 minutes per job.

- The data fields don’t reflect real workflows.

- The system does not generate visible value for the technician.

Building features without diagnosing behavioral root causes is equivalent to replacing equipment without failure analysis. Both create motion without progress.

2. The MVP Is the Pilot Installation

In large-scale maintenance upgrades—such as predictive maintenance deployments—organizations rarely convert an entire plant at once. Instead, they pilot on a subset of critical assets.

For example, General Electric introduced predictive maintenance solutions through phased pilots before scaling industrial IoT across global assets. Sensors were deployed selectively, results measured, reliability validated—then expanded.

This mirrors the Minimum Viable Product (MVP) in digital development.

An MVP is not incomplete work; it is a controlled pilot. It answers:

- Does this tool solve a real operational problem?

- Do users naturally integrate it into their routine?

- Is there measurable improvement?

Rolling out a full digital suite across all maintenance crews without a pilot is the equivalent of retrofitting an entire facility with new equipment without testing one production line first.

It is not ambition. It is risk exposure.
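The three pilot questions above can be turned into an explicit go/no-go check before scaling. The sketch below is only an assumption about how a team might record pilot results: the field names, the 60% weekly-usage threshold, and the 10% improvement threshold are hypothetical and should be set by each organization.

```python
from dataclasses import dataclass


@dataclass
class PilotResult:
    # All fields are hypothetical measures a pilot team might record.
    problem_confirmed: bool          # users confirm a real operational problem is solved
    weekly_active_share: float       # fraction of pilot users using the tool each week
    baseline_minutes_per_job: float  # admin time per job before the pilot
    pilot_minutes_per_job: float     # admin time per job during the pilot


def improvement(result: PilotResult) -> float:
    """Relative reduction in time per job (positive means faster)."""
    saved = result.baseline_minutes_per_job - result.pilot_minutes_per_job
    return saved / result.baseline_minutes_per_job


def ready_to_scale(result, min_active_share=0.6, min_improvement=0.1):
    """True only if all three pilot questions are answered positively."""
    return (
        result.problem_confirmed
        and result.weekly_active_share >= min_active_share
        and improvement(result) >= min_improvement
    )


pilot = PilotResult(True, 0.7, 30.0, 24.0)
decision = ready_to_scale(pilot)
print(decision)
```

Writing the thresholds down before the pilot starts keeps the scaling decision tied to evidence rather than to enthusiasm for the tool.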

3. User Buy-In Is Operator Acceptance

In maintenance projects, no matter how technically sound an upgrade is, it will fail if operators and technicians reject it.

Digital tools require the same operator validation.

If a technician perceives the tool as:

- Surveillance rather than support,

- Extra workload rather than simplification,

- Management’s idea rather than a field solution,

adoption will stall—not through rebellion, but through passive underuse.

In both maintenance and digital projects, acceptance is engineered, not assumed.

4. Commissioning and Continuous Tuning

When new equipment is installed, commissioning does not end at mechanical completion. There is calibration, fine-tuning, load testing, and performance monitoring. Early vibration readings, temperature trends, and operational data guide adjustments.

Similarly, digital tools require post-deployment commissioning:

- Feature usage analytics

- User behavior monitoring

- Feedback loops

- Iterative updates

A strong example of continuous iteration at scale is OpenAI with its ChatGPT releases. Early versions were deployed broadly but iteratively refined based on real-world usage patterns, user feedback, safety evaluations, and performance data. The system evolved through staged releases rather than a single static launch. Continuous tuning—technical and behavioral—drove sustained adoption.

This is no different from condition-based maintenance: measure → analyze → adjust → validate → repeat.

5. Scaling: From Local Success to Systemic Integration

Scaling a maintenance initiative—whether Total Productive Maintenance (TPM), predictive analytics, or reliability-centered maintenance—requires more than replication. It demands training, governance structures, leadership alignment, and cultural reinforcement.

To scale successfully, digital industrial platforms must combine technological capability with structured change management and capability development across sites. The lesson is consistent: scaling requires organizational readiness, not just technical readiness.

Digital tools behave the same way.

A solution that works for one department may fail at enterprise scale unless:

- Workflows are standardized,

- Training is embedded,

- Feedback remains continuous,

- Leadership reinforces usage expectations.

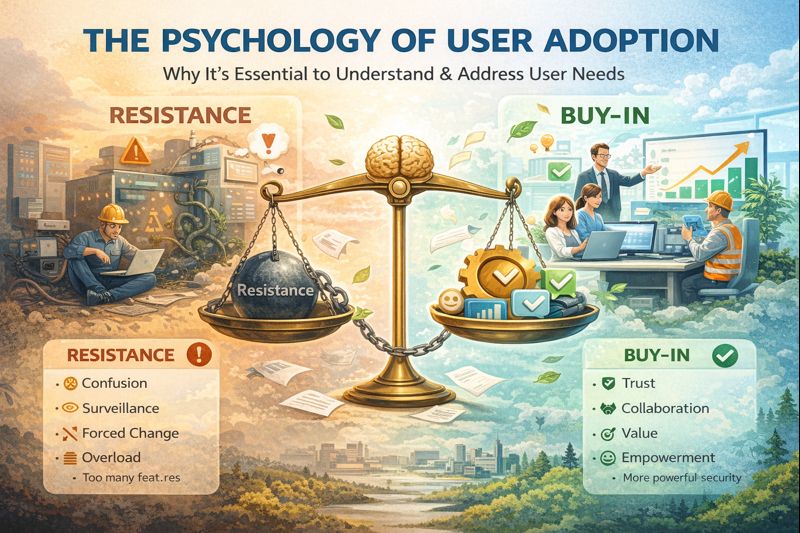

The Psychology of Adoption: Understanding Silent Resistance

Resistance to digital tools is rarely loud or explicit. More often, it manifests as subtle avoidance, minimal compliance, or superficial engagement. From a psychological perspective, this “silent friction” reflects a misalignment between the tool and the user’s internal model of work, identity, and competence.

The Swiss psychiatrist Carl Jung emphasized that human behavior is shaped not only by conscious decisions but also by unconscious patterns, habits, and archetypes. In works such as Modern Man in Search of a Soul and Man and His Symbols [4], Jung described how individuals instinctively resist forces that threaten their sense of autonomy, mastery, or identity. When a digital tool disrupts established workflows without acknowledging the user’s experience and expertise, it can be perceived—consciously or unconsciously—as a loss of agency. This triggers protective behaviors, not because users reject progress, but because they seek psychological safety and continuity.

In organizational contexts, this resistance is often misinterpreted as stubbornness or lack of digital maturity. In reality, it is frequently a signal of unmet emotional and cognitive needs. Users must not only understand how to use a tool; they must understand how the tool supports their competence, reinforces their value, and respects their existing knowledge. When tools are introduced without this alignment, friction emerges—not as open rebellion, but as disengagement.

Similarly, the research of Brené Brown highlights the central role of trust, ownership, and emotional safety in human engagement. In Dare to Lead [5], Brown explains that people support what they help create. When individuals feel excluded from the creation process, they are far less likely to invest emotionally in the outcome. Conversely, when users are involved early—when their input shapes the tool—they develop a sense of ownership that transforms adoption from obligation into commitment.

Brown also emphasizes that disengagement is often a protective response to vulnerability. Learning a new digital tool requires users to temporarily move from competence to uncertainty. Without an environment that normalizes learning and respects this transition, users may protect their professional identity by minimizing engagement. This is not a failure of training—it is a failure of psychological integration.

For digital tool developers and organizational leaders, the implication is clear: adoption is not driven by functionality alone, but by alignment with human psychology. Tools must be designed not only for efficiency, but for trust. Not only for capability, but for confidence. And not only for implementation, but for belonging.

When users see themselves in the tool—when it reflects their needs, respects their expertise, and supports their growth—resistance dissolves naturally. The tool ceases to be an external imposition and becomes an extension of their professional identity. At that point, the question of whether the tool or the user is more important becomes irrelevant. The two become inseparable components of the same system—one enabling, the other activating its value.

The Core Analogy: Tools Don’t Create Reliability—People Do

A new compressor does not create reliability unless it is properly installed, operated, and maintained.

A new digital tool does not create efficiency unless it is properly designed, adopted, and integrated.

In both domains:

- Technology is an enabler.

- Human behavior is the multiplier.

- Alignment determines return on investment.

The organizations that succeed in maintenance excellence understand that projects are not about equipment—they are about systems of people, process, and technology working together [6].

The same truth applies to digital development.

The question is no longer whether the digital tool or the user is more important. The analogy makes the answer clear: a tool without engaged users is like machinery without operators. It may exist. It may even be impressive. But it will never deliver its intended value.

Must-Know Jargon

Minimum Viable Product (MVP): An MVP is the smallest functional version of a digital tool that delivers core value and allows real users to test it in operational conditions. Its purpose is not to launch a reduced product, but to validate assumptions, gather feedback, and minimize wasted development effort before scaling.

Proof of Concept (POC): A POC is a limited experiment designed to confirm that a technical idea or approach is feasible. Unlike an MVP, which focuses on user value, a POC primarily tests whether a specific technology or integration can work under defined conditions.

Pilot Rollout: A pilot rollout is a controlled deployment of a digital tool within a specific team, site, or user group before full-scale implementation. It reduces risk by exposing the tool to real workflows, uncovering friction points, and validating organizational readiness before enterprise expansion.

User Adoption: User adoption refers to the degree to which intended users integrate a digital tool into their daily routines and rely on it consistently. Adoption goes beyond access or installation—it measures behavioral change, engagement, and sustained use.

Change Management: Change management is the structured approach to preparing, supporting, and guiding individuals through organizational change. In digital projects, it includes communication, training, stakeholder alignment, and leadership reinforcement to ensure the tool becomes embedded in practice—not just deployed technically.

Continuous Improvement (Iterative Development): Continuous improvement is the ongoing refinement of a digital tool based on user feedback, performance metrics, and operational data. Instead of treating launch as the finish line, iterative development recognizes that long-term success depends on constant tuning—both functionally and organizationally.

User-Centered Design (UCD): User-centered design is a development philosophy that prioritizes understanding user needs, behaviors, and constraints at every stage of product creation. It ensures the tool fits into real workflows rather than forcing users to adapt to poorly aligned systems.

References

[1] Houston, D. (2007). Dropbox MVP Demo and Validation Case Study. https://www.shortform.com/blog/dropbox-mvp-explainer-video/

[2] OpenAI. (2023). GPT-4 Technical Report. https://openai.com/index/gpt-4-research/

[3] Idhammar, T., IDCON Inc. (2023). Discover Your True Potential through Reliability and Maintenance Assessments.

[4] Eternalised. (June 2021). Book Review: Man and His Symbols – Carl Jung. https://eternalisedofficial.com/2021/06/05/book-review-man-and-his-symbols/

[5] Irina, PARENTOTHECA. (May 2024). Dare To Lead by Brené Brown – Book Summary, Notes and Quotes. https://parentotheca.com/2024/05/07/dare-to-lead-brene-brown-book-summary/

[6] Rezika, A. (January 6, 2026). The New Toolkit: Why Maintenance Digital Skills Can’t Be Outsourced. Maintenance World Magazine.

Ahmed Rezika

Ahmed Rezika is a seasoned Projects and Maintenance Manager with over 25 years of hands-on experience across the steel, cement, and food industries. A certified PMP, MMP, and CMRP (2016-2024) professional, he has successfully led both greenfield and upgrade projects while implementing innovative maintenance strategies. As the founder of SimpleWays OU (2019-2026), Ahmed is dedicated to creating better-managed, value-adding work environments and making AI and digital technologies accessible to maintenance teams. His mission is to empower maintenance professionals through training and coaching, helping organizations build more effective and sustainable maintenance practices.
